Faster caterpillars

Culture change and AI

I’m writing a piece on AI and organisational culture, but I can’t write that one until I’ve said a few things in this one.

The London Office

Once we were working with a multinational company in its London office on a digital innovation project. It felt off from the start. People turned up late to meetings. Slide decks ran to 200 pages. Decisions seemed to evaporate in the room. How did this place actually work?

Our main contact – a “lifer” who’d joined from university – swung between enthusiasm and despair from one meeting to the next. One day they loved our idea, the next they blamed us when the wider group didn’t embrace it. We couldn’t work out what was going on.

In desperation, I called a contact who had worked there for a year – long enough to navigate the culture, but not long enough to lose their outside perspective.

“You’re fine,” they said, and explained.

At this company, decisions emerged through consensus; people signalled their support by showing up. The 200-page decks? Boxes of evidence – but only the first slide ever mattered. It had to tell the whole story. One slide. One story. And meetings started 10 to 15 minutes late because everyone was booked back-to-back in a building the size of a small town. They’d stopped noticing that everything started almost exactly 10 minutes after the calendar said.

Their advice: “Plan your presentation as a one-slide story with a 199-page appendix. Deliver it in 45 minutes. The decision will arrive an hour later, once they’ve connected.”

We followed their suggestion. Everything was fine after that.

That’s culture. They couldn’t articulate it explicitly, but you could see it in how they worked. “How things actually get done around here.” More the outcome of the micro-decisions people made every day than a set of rules or a policy.

The Gap

Three years into the generative AI wave, nearly nine in ten organisations report using it. Only six percent see meaningful bottom-line impact, according to McKinsey research (November 2025, though I’d take McKinsey’s data and its definition of “meaningful impact” with a pinch of salt).

The gap isn’t technical. The tools work. The pilots impress. The problem is that most organisations are trying to deploy AI into cultures that reject it – and they don’t even know it. It’s not resistance so much as habit – ingrained ways of deciding and working that break the pilots or quietly reject them.

As Wharton professor Ethan Mollick says:

The challenge isn’t implementing AI as much as it is transforming how work gets done. And that transformation needs to happen while the technology itself keeps evolving.

In his book Co-Intelligence, he goes further:

Without a fundamental restructuring of how organizations work, the benefits of AI will never be recognised.

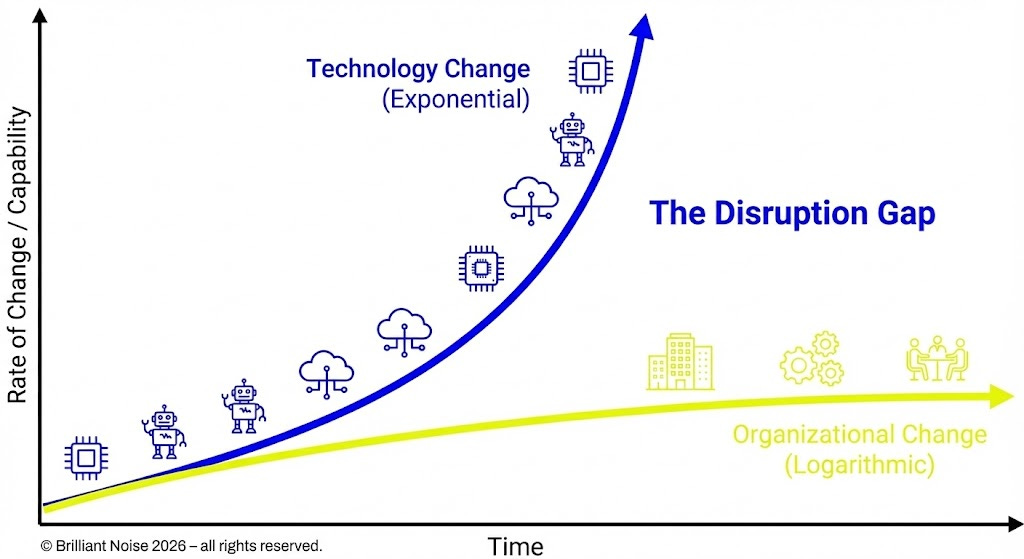

There’s a name for this mismatch. Scott Brinker (via Neil Perkin) calls it Martec’s Law: technology changes exponentially; organisations change logarithmically. Structures, behaviours, culture – they all move slower than the tools.

That’s the “culture lag” problem. And it’s eating executives’ AI transformation ambitions for breakfast.

What culture actually is

The late Edgar Schein’s classic definition: culture is “a pattern of shared basic assumptions that the group learned as it solved its problems of external adaptation and internal integration… taught to new members as the correct way to perceive, think, and feel.”

It manifests as three layers: artefacts (tools, rituals, language), espoused values (“innovation”, “experimentation”), and basic assumptions – what people really believe about risk, control, status, and technology.

AI adoption runs into all three.

An organisation’s AI artefacts might look impressive. Its stated values no doubt include “embracing innovation and learning”. But the basic assumptions – the invisible ones – those determine whether people actually experiment, surface failures, or quietly paste prompts into personal ChatGPT accounts where no one can see.

Faster caterpillars

John Hagel argues that most “digital transformation” is actually optimisation: applying technology “to do what the company has always done faster and cheaper.” He warns this isn’t transformation unless the result is “a butterfly that would be unrecognizable relative to the caterpillar.”

Organisations are making themselves “faster caterpillars”, as MIT Sloan’s George Westerman memorably put it. The image was irresistible – I had to let Nano Banana Pro have its way with it.

Ajay Agrawal and colleagues give an economic framing: real transformation comes when AI-driven prediction supports new systems and decisions, not just better old ones. “Those new decisions replace rules.”

To Agrawal, AI “point solutions” – AI to write emails, summarise meetings, generate first drafts – are legitimate productivity gains, but they’re not transformations. They’re caterpillars on wheels.

Transformation means reimagining value creation, structure, and culture. It means changing the questions, not just speeding up the answers.

Richard Rumelt, in The Crux, is direct:

Organization and culture are strategic issues… When they impede efficiency, change, and innovation, they become strategic issues. Grand statements… that ignore festering organizational problems are part of the problem.

What has to change

The story of how Nokia lost its mobile dominance is instructive. Research into its decline found that a “climate of fear” and performance-driven culture suppressed bad news. Temperamental leaders created an environment where middle managers were afraid to disappoint. The company’s strategy wasn’t the problem – its culture was. People stopped telling the truth.

If people fear surfacing risks, failed experiments, or uncomfortable truths about AI, the organisation learns nothing. It gets polished presentations about successful pilots while the real challenges fester unseen.

For leaders, this means unlearning control and perfectionism. The assumption that you need to understand everything before approving it. The instinct to centralise AI into a “Centre of Excellence” where experts can manage it safely – and where it will quietly die.

Our organisations are built around the limitations of human intelligence – the only form we’ve had available until now. AI changes the economics of expertise, the logic of delegation, the meaning of “span of control”. Leaders who try to understand AI by having it explained to them in a meeting are missing the point. You have to use it. Marinate in it. You can’t lead a culture of experimentation from a position of abstinence.

Experimentation is a capability

The cultural capability that matters most for AI adoption is experimentation. The kind where failure is data, not disgrace.

Traditional corporate structures are bad at spotting granular AI use cases. The people who actually know where the friction is, where the repetitive work lives, where judgement gets wasted on routine decisions – they’re not in the strategy team. They’re doing the work.

The best approach is bottom-up discovery with top-down permission. Give people the tools and the safety to experiment. Reward the ones who find transformational uses.

But the harder challenge is making it safe to use AI openly. Anthropic’s research found that 69% of professionals mentioned stigma around using AI at work. People hide how they work because they’re afraid of being seen as cheating, or lazy, or replaceable. “I don’t tell anyone my process because I know how a lot of people feel about AI.”

Cultural norms – not policies – govern whether AI is openly used or driven underground. If people can’t talk about how they’re using these tools, the organisation can’t learn from what’s working. The organisation can’t build shared practices. It gets a thousand individual experiments, none of them connected.

Agency, not automation

There’s a design principle buried in the research that matters more than most realise: giving people the ability to use and shape AI increases their likelihood of using it.

When people feel they have agency – that AI is a tool they control, not a system that controls them – adoption goes up. When they feel overruled or surveilled, they resist. Human-centred AI culture means people feel empowered, not replaced.

This extends to how you frame the whole endeavour. “AI transformation” sounds like something that happens to people. “Building AI capability” sounds like something people do. Change imposed creates resistance. Change owned creates momentum.

Most executives expect AI to drive substantial transformation within three years. Most of them are currently emphasising tactical efficiency over innovation. They want butterflies, but they’re investing in faster caterpillars.

The gap between expectation and approach is culture. It’s not that leaders don’t understand AI’s potential – it’s that their organisations aren’t built to realise it. The structures, incentives, and assumptions that made the company successful are now the barriers to the next phase.

AI will combine with new ways of working to create a wave of change that will finally break through the ancient coastal defences of the twentieth-century corporation. But only if the culture allows it.

Culture isn’t something you install or upgrade. It’s the residue of a million decisions. It’s how things actually get done around here.

You can design an organisation the way you design a garden. What you aim for and what you get depend not on a blueprint but on intention and relationship – on how you work with a complex and challenging ecosystem. The results can be an awkward mess, a patchy success, or a small piece of paradise on earth – sometimes all three at different times.

In gardens, the ecosystem is nature. In organisations, it’s culture.

AI won’t transform your organisation. Culture will.

That’s all for this week.

If you read this far, here is a medal.

Antony

Great post! Read a fine book that came out last year - There's Got to Be a Better Way by Nelson P. Repenning and Donald C. Kieffer. Not about AI but about how managements everywhere usually have no real idea of how work actually gets done within their own organisations - lots of good stuff on dynamic work design as well as the universal use of "workarounds" - where people end up deviating from how they are supposed to do things just to get it done - although a short term fix, it just stores up trouble for later... AI adoption seems to be falling into this very trap.

"The fix-it-all-at-once change strategy has several problems and rarely succeeds. It overwhelms the system with work and, perversely, often pushes people deeper into expediting and other workarounds. When people do carve out the space to make the required changes, the modifications suggested by senior leaders are typically disconnected from how the work is actually done. For example, we worked with one manufacturing plant that, in an effort to reduce eye injuries, required everyone to wear safety glasses. This change worked great except when work was done in hot, humid areas, where the glasses tended to fog. Technicians were now stuck between a proverbial rock and a hard place: follow the rule to the letter, risk tripping, and do the task with obscured vision or violate the rule. Mandating one-size-fits-all changes often forces people doing the work to make additional, often surreptitious workarounds. Not surprisingly, putting those who do the work in an impossible bind does little to increase their motivation for participating in change." (Nelson P. Repenning and Donald C. Kieffer, There's Got to Be a Better Way)